
6/16/2023 Dd linux copy fast transfer

Are your endpoints physically near each other? Maybe consider a different network medium, one designed for moving buttloads of data around. CPU processing can be offloaded to an adapter card, and your Ethernet won't be saturated for minutes at a time.

Below is a (low-end) InfiniBand setup that cost around $500 in eBay parts (Mellanox IS5022 switch, two CX353A QDR cards (maybe FDR, I don't remember) and new cables). I ran dd from a hypervisor with 20+ VMs running on it, so there is a fair amount of I/O delay in it. The SSD transfer (an iSCSI mount) is still noteworthy, however.

I did think about splitting the file into chunks and running multiple nc processes to make use of more cores; however, someone else may have a better suggestion.
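The chunk-splitting idea can be tried locally before any network is involved. The following is a minimal sketch, not a tested transfer script: it splits a small stand-in file into fixed-size pieces, reassembles them, and checks that the result matches. The file names, the 1 MiB test size, and the 256 KiB chunk size are all assumptions for illustration, and the step where each chunk would go through its own nc process is left out.

```shell
#!/bin/sh
# Sketch: split a file into fixed-size chunks, then reassemble and verify.
set -eu

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT

src="$workdir/image.bin"   # hypothetical stand-in for a large VHD file

# Create 1 MiB of pseudo-random test data (16 blocks of 64 KiB).
dd if=/dev/urandom of="$src" bs=65536 count=16 2>/dev/null

# Split into 256 KiB pieces named chunk.aa, chunk.ab, ...
split -b 262144 "$src" "$workdir/chunk."
chunk_count=$(ls "$workdir"/chunk.* | wc -l | tr -d ' ')

# Each chunk could now be sent by a separate nc process (not shown here).
# On the receiving side, concatenating the chunks in name order rebuilds the file.
cat "$workdir"/chunk.* > "$workdir/rebuilt.bin"

# Compare the rebuilt file with the original byte for byte.
if cmp -s "$src" "$workdir/rebuilt.bin"; then
    result="identical"
else
    result="different"
fi

echo "chunks: $chunk_count, rebuilt copy is $result"
```

Whether this helps in practice depends on whether the per-stream CPU cost really is the limit; the extra write and read of the chunk files also costs disk I/O.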

cp stands for copy and is, you guessed it, used to copy files and directories in Linux. You can use cp to copy files to a directory, copy one directory to another, and copy multiple files to a single directory. In its simplest form, the syntax is cp SOURCE DEST.

I'm moving large virtual machine image (VHD) files of 2TB between two Ubuntu hosts. My latest attempt took 200 minutes to transfer 2TB, a throughput of about 170MB/sec. I'm trying techniques such as netcat, and scp with the basic arcfour cipher. The hardware on each end is 6 x enterprise-grade SSDs in RAID 10, on a hardware RAID controller. In all cases, the network and disk I/O have plenty of capacity left; the bottleneck is one CPU core pegged at 100% constantly.

I've performed basic benchmarks using various methods with a 30GB test file transfer. nc hits 100% CPU on each end, which I assume is just processing the I/O from the disk to the network. Because I dd'd an empty file for testing, I don't believe that pigz was having to work too hard. However, when I attempted pigz on a production VHD file, pigz hit 1200% CPU load, and I believe this started to become the bottleneck. Therefore my fastest time was set by nc on its own.
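Two of the claims above are easy to sanity-check with a few lines of shell. This is only a rough sketch: the first part assumes binary units (1 TB = 1024 * 1024 MB), and the second part uses gzip as a stand-in for pigz (both produce the same format) on an 8 MiB zero-filled stream, which is an assumed test size rather than anything from the original benchmarks.

```shell
#!/bin/sh
set -eu

# 1) Average throughput for 2 TB moved in 200 minutes.
size_mb=$((2 * 1024 * 1024))   # 2 TB expressed in MB (binary units assumed)
seconds=$((200 * 60))
rate=$((size_mb / seconds))    # integer division, so the result is rounded down
echo "average rate: ${rate} MB/s"

# 2) Why an empty (zero-filled) test file is an easy case for a compressor.
raw_bytes=$((8 * 1048576))
packed_bytes=$(dd if=/dev/zero bs=1048576 count=8 2>/dev/null | gzip -c | wc -c | tr -d ' ')
echo "raw: ${raw_bytes} bytes, compressed: ${packed_bytes} bytes"
```

The first figure lands close to the quoted 170MB/sec. The second shows that zero-filled data shrinks to a tiny fraction of its size, so a benchmark on an empty file says little about how hard the compressor has to work on a real VHD.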

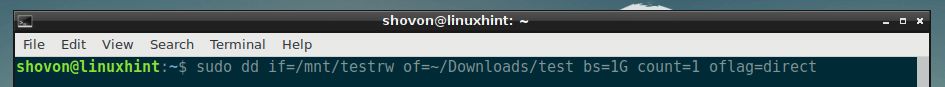

I'm working with high-end hardware; however, I'm hitting CPU bottlenecks in all situations when attempting to move large amounts of data.

This may help:

$ sudo dd if=/dev/disk5 of=kiosk.img bs=4M && sync

The bs argument sets the block size, so dd reads and writes in 4 MiB blocks instead of its 512-byte default, which is faster. The trailing sync is very important: it flushes final writes to the device at the end of the process, to avoid having an incomplete device. Chaining it with && makes it run only after dd has completed successfully.

Another approach is to find out how long the image is on the source device, and only copy that much data to the image file. Like this:

$ sudo dd if=/dev/disk5 of=kiosk.img bs=(content size in bytes) count=1 && sync

Finding out the content size is not trivial (it depends on the source, how it was created, etc.), but the actual content might be 10% of the size of the source, which explains why all reads and writes take so long, much longer than necessary. Again, the default is for dd to copy the entire length of the source storage device, which could be much larger than the actual content, and the size of kiosk.img ends up being much larger than necessary. Figuring out how long the actual content is isn't trivial, but it's important.
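A variation on the size-limited copy, shown here as a sketch rather than the method described above, keeps a moderate block size and rounds the content size up to a whole number of blocks, which avoids asking dd for one very large buffer. The "device" is an ordinary 4 MiB file so the example runs without sudo, and the 1.5 MiB content size is a made-up value; on a real system the source would be something like /dev/disk5 and the size would have to be determined separately.

```shell
#!/bin/sh
set -eu

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT

src="$workdir/fake-device.bin"   # hypothetical stand-in for /dev/disk5
img="$workdir/kiosk.img"
bs=1048576                       # 1 MiB blocks

# Build a 4 MiB stand-in "device".
dd if=/dev/zero of="$src" bs="$bs" count=4 2>/dev/null

# Assumed content size: 1.5 MiB. Round up to whole blocks.
content_bytes=1572864
blocks=$(( (content_bytes + bs - 1) / bs ))

# Copy only the blocks that hold content, then flush writes once dd succeeds.
dd if="$src" of="$img" bs="$bs" count="$blocks" 2>/dev/null && sync

img_bytes=$(wc -c < "$img" | tr -d ' ')
echo "copied $blocks blocks, image is $img_bytes bytes"
```

The image ends up slightly larger than the content because of the rounding, but still far smaller than a copy of the whole source.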
